Researchers from University of California San Diego have found a way to distinguish among hand gestures that people are making by examining only data from noninvasive brain imaging, without information from the hands themselves. The results are an early step in developing a noninvasive brain-computer interface that may one day allow patients with paralysis, amputated limbs or other physical challenges to use their mind to control a device that assists with everyday tasks.

The research, recently published online ahead of print in the journal Cerebral Cortex, represents the best results to date in distinguishing single-hand gestures using a completely noninvasive technique, in this case magnetoencephalography (MEG).

“Our goal was to bypass invasive components,” said the paper’s senior author Mingxiong Huang, PhD, co-director of the MEG Center at the Qualcomm Institute at UC San Diego. Huang is also affiliated with the Department of Electrical and Computer Engineering at the UC San Diego Jacobs School of Engineering and the Department of Radiology at UC San Diego School of Medicine, as well as the Veterans Affairs (VA) San Diego Healthcare System. “MEG provides a safe and accurate option for developing a brain-computer interface that could ultimately help patients.”

The researchers underscored the advantages of MEG, which uses a helmet with an embedded 306-sensor array to detect the magnetic fields produced by electric currents moving between neurons in the brain. Alternative brain-computer interface techniques include electrocorticography (ECoG), which requires surgical implantation of electrodes on the brain surface, and scalp electroencephalography (EEG), which locates brain activity less precisely.

“With MEG, I can see the brain thinking without taking off the skull and putting electrodes on the brain itself. I just have to put the MEG helmet on their head. There are no electrodes that could break while implanted inside the head; no expensive, delicate brain surgery; no possible brain infections.”

Roland Lee, MD, study co-author, director of the MEG Center at the UC San Diego Qualcomm Institute, emeritus professor of radiology at UC San Diego School of Medicine, and physician with VA San Diego Healthcare System

Lee likens the safety of MEG to taking a patient’s temperature. “MEG measures the magnetic energy your brain is putting out, like a thermometer measures the heat your body puts out. That makes it completely noninvasive and safe.”

Rock paper scissors

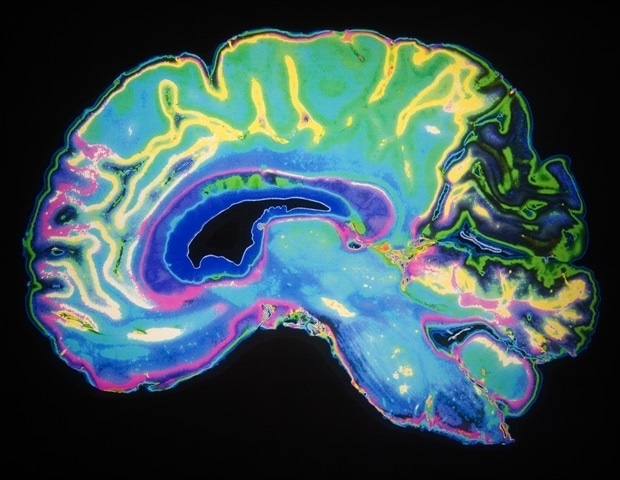

The current study evaluated the ability to use MEG to distinguish between hand gestures made by 12 volunteer subjects. The volunteers were equipped with the MEG helmet and randomly instructed to make one of the gestures used in the game Rock Paper Scissors (as in previous studies of this kind). MEG functional information was superimposed on MRI images, which provided structural information on the brain.

To interpret the data generated, Yifeng (“Troy”) Bu, an electrical and computer engineering PhD student in the UC San Diego Jacobs School of Engineering and first author of the paper, wrote a high-performing deep learning model called MEG-RPSnet.

“The special feature of this network is that it combines spatial and temporal features simultaneously,” said Bu. “That’s the main reason it works better than previous models.”

When the results of the study were in, the researchers found that their techniques could be used to distinguish among hand gestures with more than 85% accuracy. These results were comparable to those of previous studies with a much smaller sample size using the invasive ECoG brain-computer interface.

The team also found that MEG measurements from only half of the brain regions sampled could generate results with only a small (2–3%) loss of accuracy, indicating that future MEG helmets might require fewer sensors.

Looking ahead, Bu noted, “This work builds a foundation for future MEG-based brain-computer interface development.”

Journal reference:

Bu, Y., et al. (2023) Magnetoencephalogram-based brain-computer interface for hand-gesture decoding using deep learning. Cerebral Cortex. doi.org/10.1093/cercor/bhad173.
